The code on this page is placed in the public domain in the hope that others will find it a useful starting place for developing their own software. Most of it is neither well documented nor thoroughly tested.

Read about a MATLAB implementation of Q-learning and the mountain car problem here. That page also includes a link to the MATLAB code that implements a GUI for controlling the simulation.

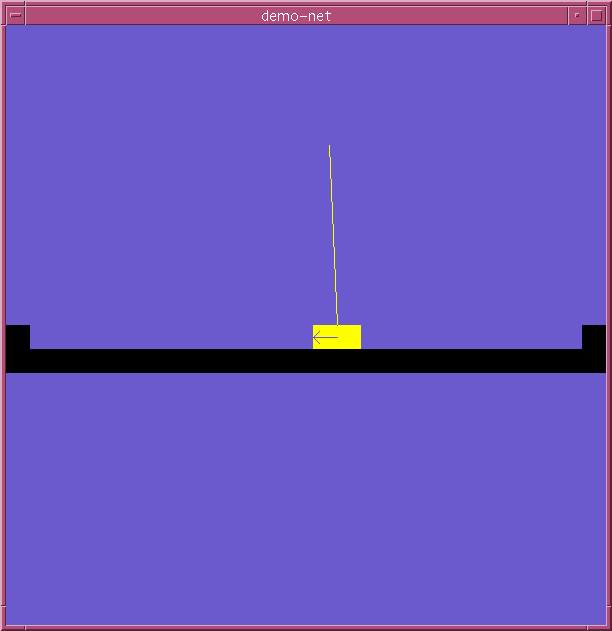
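For readers new to the method, the heart of tabular Q-learning is a single update applied after each step of the simulation. The following is a minimal sketch in C, not the MATLAB code linked above. The names `q_update` and `max_q`, the row-major table layout, and the assumption that the car's continuous state has already been discretized into an integer index are all illustrative choices.

```c
#include <assert.h>
#include <stddef.h>

/* Largest action value in one row of the Q table. */
static double max_q(const double *q_row, size_t n_actions)
{
    assert(n_actions > 0);
    double best = q_row[0];
    for (size_t a = 1; a < n_actions; a++) {
        if (q_row[a] > best) {
            best = q_row[a];
        }
    }
    return best;
}

/* One tabular Q-learning update for the transition (s, a, r, s_next).
 * q is a row-major table with n_actions entries per state (assumed layout).
 * alpha is the learning rate and discount is the discount factor. */
void q_update(double *q, size_t n_actions, size_t s, size_t a,
              double r, size_t s_next, double alpha, double discount)
{
    assert(a < n_actions);
    /* Target: immediate reward plus discounted best value of the next state. */
    double target = r + discount * max_q(q + s_next * n_actions, n_actions);
    double *q_sa = &q[s * n_actions + a];
    /* Move the current estimate a fraction alpha toward the target. */
    *q_sa += alpha * (target - *q_sa);
}
```

In a mountain car simulation, one would typically call `q_update` once per time step with a reward of -1 until the car reaches the goal, though the linked implementation may differ in its details.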

Want to try your hand at balancing a pole? Try one of the following. The most recent version is first.

Here is code for learning to balance a pole, used for experiments described in Strategy Learning with Multilayer Connectionist Representations, by C. Anderson, in the Proceedings of the Fourth International Workshop on Machine Learning, Irvine, CA, 1987. It includes C code and a README explaining how to compile and run it. You may run the demo executable to try to balance the pole with the mouse, or run-demo-net to demonstrate the training of the neural network to balance the pole. The graphics display requires X windows. Here is a screenshot:

train.c is a C program for training multilayer, feedforward neural networks with error backpropagation using early stopping and cross-validation. The program includes the option of training the networks on a CNAPS Server (see the section above on Parallel Algorithms). The results are written to stdout in either short format or long format. Short-format results can be summarized and sorted by summshort.awk, an awk script. For long-format results, first use nnlong-to-short.awk to convert the file to short format.
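To illustrate the idea of early stopping, here is a minimal sketch in C that picks the best epoch from a sequence of validation errors. It is not taken from train.c, whose actual stopping criterion is not described here. The function name `early_stop_epoch` and the `patience` parameter are assumptions made for the example.

```c
#include <assert.h>

/* Scan per-epoch validation errors and return the epoch with the lowest
 * error seen before stopping. Scanning stops once `patience` epochs have
 * passed since the best epoch without improvement (hypothetical rule).
 * If stopped_at is non-NULL, it receives the last epoch examined. */
int early_stop_epoch(const double *val_err, int n_epochs, int patience,
                     int *stopped_at)
{
    assert(n_epochs > 0 && patience > 0);
    int best = 0;
    int e;
    for (e = 1; e < n_epochs; e++) {
        if (val_err[e] < val_err[best]) {
            best = e;               /* new best: keep these weights */
        } else if (e - best >= patience) {
            break;                  /* no improvement for too long */
        }
    }
    if (stopped_at) {
        *stopped_at = (e < n_epochs) ? e : n_epochs - 1;
    }
    return best;
}
```

The weights saved at the returned epoch would then be the ones evaluated on the held-out test data.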

This tutorial in PostScript describes how to use the train.c program and awk scripts. It also describes how to run train.c from within Matlab using functions described below.

Since much of the work in any neural network experiment goes into data manipulation, we have written a suite of Matlab functions for preparing data, launching the train.c program, and displaying the results.

For starters, here is nnTrain.m, a function that accepts arguments such as the name of the data matrix, writes data files to be read by the train.c program, and starts a background process running the train.c program. It has evolved to include many features we find handy, such as running remotely on another machine, including on our CNAPS Server. As mentioned above, this tutorial in PostScript describes how to use train.c, nnTrain.m and other Matlab functions mentioned below. The LaTeX source file is available as an example for inexperienced LaTeX'ers. Also, a compressed tar file is available containing the LaTeX source and figures.

In addition to summarizing the output of train.c with the awk scripts, you may plot histograms of test error for each set of parameter values included in the short format output file. Use the Matlab function nnRuns.m to load into Matlab a matrix containing the results of all runs, and nnPlotRuns.m to display one histogram for each set of parameter values. nnRuns.m needs meanNoNaN.m.
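Judging only by its name, meanNoNaN.m presumably averages a set of values while skipping NaN entries, which is useful when some runs produce no result. The following C sketch shows that idea. The function name `mean_no_nan` and the choice to return NaN when every entry is NaN are assumptions, not a description of the Matlab file.

```c
#include <assert.h>
#include <math.h>
#include <stddef.h>

/* Mean of the non-NaN entries of v[0..n-1].
 * Returns NaN if there are no valid entries (assumed convention). */
double mean_no_nan(const double *v, size_t n)
{
    assert(v != NULL || n == 0);
    double sum = 0.0;
    size_t count = 0;
    for (size_t i = 0; i < n; i++) {
        if (!isnan(v[i])) {     /* skip missing results */
            sum += v[i];
            count++;
        }
    }
    return count > 0 ? sum / (double)count : NAN;
}
```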

Long format output includes information for learning curves, network responses to test data, and the best weight values for each training run. These can be extracted from the output file and displayed within Matlab using nnResults.m. nnResults calls these Matlab functions: nnParseResults.m, nnPlotCurve.m, nnPlotOuts.m, nnPlotOutsScat.m, nnShowWeights.m, nnDrawBoxes.m, fskipwords.m.

The above Matlab code is being modified to be in an object-oriented form using Matlab 5.

rfir.m is a Matlab function for training recurrent networks using a generalization of Williams and Zipser's real-time recurrent learning, modified for networks with FIR synapses and based on the work of Eric Wan. An example of its use is xorrfir.m, which trains a recurrent network to form the exclusive-or of two input bits.
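In a network with FIR synapses, each connection is a finite impulse response filter rather than a single scalar weight, so its output depends on the current input and a fixed number of past inputs. The following C sketch shows the forward computation for one such synapse. It is not drawn from rfir.m. The names `fir_synapse` and `fir_push` and the newest-first history layout are assumptions for illustration.

```c
#include <assert.h>
#include <stddef.h>

/* Output of one FIR synapse: a weighted sum of the current and past inputs.
 * w[k] is the tap weight for delay k; x_hist[k] is the input k steps ago. */
double fir_synapse(const double *w, const double *x_hist, size_t n_taps)
{
    assert(n_taps > 0);
    double y = 0.0;
    for (size_t k = 0; k < n_taps; k++) {
        y += w[k] * x_hist[k];
    }
    return y;
}

/* Shift a new input sample into the history buffer (newest at index 0),
 * discarding the oldest sample. */
void fir_push(double *x_hist, size_t n_taps, double x_new)
{
    assert(n_taps > 0);
    for (size_t k = n_taps - 1; k > 0; k--) {
        x_hist[k] = x_hist[k - 1];
    }
    x_hist[0] = x_new;
}
```

At each time step one would call `fir_push` with the presynaptic unit's output and then `fir_synapse` to obtain the synapse's contribution to the postsynaptic unit.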

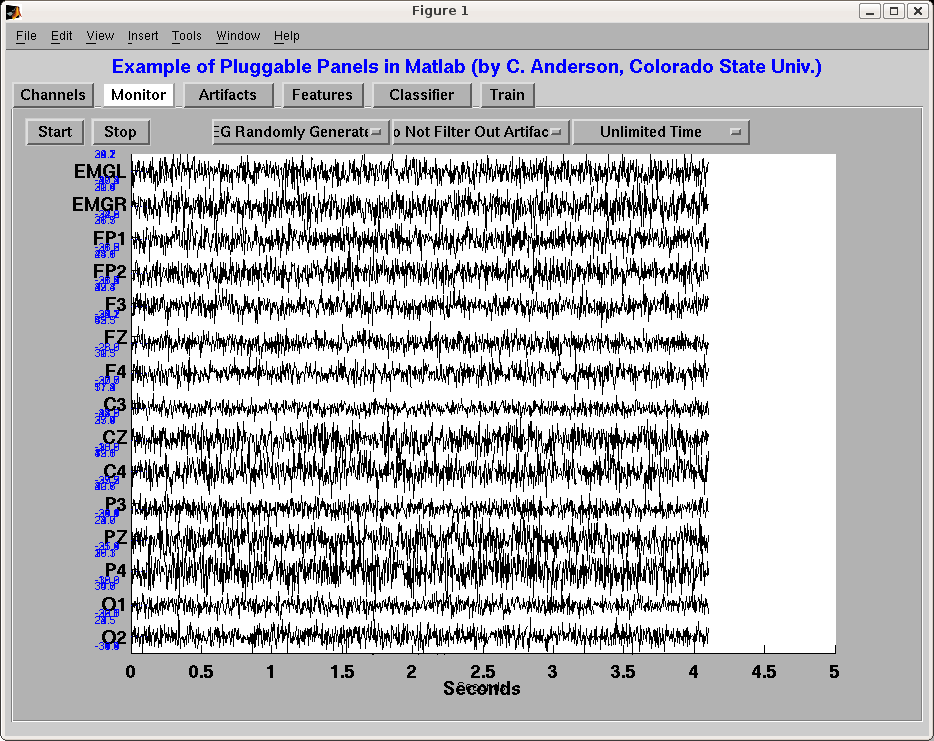

We have written some code that implements tabbed panels for Matlab. The implementation makes it very easy to add additional panels to an application. It can be downloaded here as pluggablePanels.tar.gz. It includes a README file and a subset of files needed for the example application of an interface for an EEG recording system. Here is a screenshot: